Why SAP Fiori UX Still Fails Users (And How AI Copilots Fix Them)

Published April 2026 · By the PickGeniusLab Team

I have spent the better part of the last decade deploying, customising, and troubleshooting SAP Fiori across manufacturing plants, shared service centres, and global logistics networks. I have sat next to accounts payable clerks who muttered under their breath every time a search returned zero results. I have watched warehouse supervisors pull out a spreadsheet — yes, a spreadsheet — because the Fiori app on their tablet crashed for the third time that shift. And I have had the uncomfortable conversation with an IT director who had just spent eighteen months rolling out Fiori across four thousand users, only to find adoption stalled at thirty percent.

SAP Fiori was supposed to fix enterprise UX. In 2013, when SAP unveiled it, the pitch was compelling: a consistent, role-based, consumer-grade interface that would make ERP feel as intuitive as a smartphone. In 2026, that promise still lands beautifully in sales decks. The reality on the shop floor is, politely, more complicated.

This article is not a hit piece on SAP. I respect the engineering that goes into these products and the genuine progress the company has made over the years. But I also believe that honesty serves SAP consultants and IT leaders better than cheerleading. So let me walk you through exactly where Fiori still lets users down in 2026, why fixing it from within the platform is so hard, and — most importantly — how AI copilots are starting to fill the gap in ways that are practical, measurable, and deployable today.

The Promise vs. the Reality of SAP Fiori

When SAP launched Fiori, the design philosophy was built on five principles: role-based, responsive, simple, coherent, and delightful. These are good principles. They are the right principles. The problem is that enterprise software doesn't exist in a lab — it exists inside organisations with decades of customisation debt, complex authorisation hierarchies, performance constraints from on-premise hardware, and integration requirements that were never designed with a mobile-first UI in mind.

In my experience working with more than thirty enterprise SAP clients across Europe and the Middle East, I can count on one hand the number of Fiori rollouts where users described their daily experience as genuinely smooth. Most users describe Fiori the same way they describe their commute: it gets them where they need to go, most of the time, but it's not something they look forward to.

The gap between the Fiori marketing narrative and the lived user experience comes down to a few structural tensions that SAP has never fully resolved:

- Fiori was designed for S/4HANA purity, but most organisations are not purely S/4HANA. Hybrid landscapes — ECC alongside S/4HANA, cloud alongside on-premise — create seams that the UI cannot hide gracefully.

- Customisation breaks coherence. The moment a customer starts adapting Fiori tiles, workflows, and fields to match their business processes, the "consistent" experience begins to fragment. Maintaining that coherence across upgrades is expensive and often deprioritised.

- Fiori's performance depends on infrastructure that many organisations under-invest in. The front-end server, the OData services, the ABAP backend — each layer can introduce latency, and that latency compounds.

None of this means Fiori is a failure. It is, by most measures, a significant improvement over the SAP GUI it replaced for many transaction types. But significant improvement is not the same as a solved problem, and in 2026 the bar for enterprise UX has risen sharply. Users now compare their work software to tools like Notion, Slack, and Copilot in Microsoft 365. Fiori, even at its best, cannot compete on that dimension without external augmentation.

The Top 5 Fiori UX Failure Patterns

Let me be specific. Vague complaints about "bad UX" don't help anyone. Here are the five failure patterns I encounter most consistently across client engagements, with concrete examples of how they manifest.

1. Slow Load Times That Destroy Productivity

The single most universal complaint I hear from Fiori users is load time. Not catastrophic crashes — just slow. A procurement officer waits four to six seconds for the Manage Purchase Orders app to render. A manager waits three seconds for the My Inbox tile to load after approving a document. Over a full working day, this adds up to fifteen to twenty minutes of passive waiting — time that erodes both productivity and goodwill toward the system.

The root causes vary: under-tuned OData services, missing indexes on CDS views, insufficient caching configuration on the SAP Web Dispatcher, or simply an application server that is doing too much. The fix is almost always on the infrastructure or backend side, not the UI layer, which means it falls outside the Fiori team's remit and gets deprioritised in the release backlog.

I once ran a performance audit for a German automotive supplier and found that their most-used Fiori app — a custom goods receipt confirmation screen — was making eleven separate OData calls on initial load. The original developers had built it that way because it was the path of least resistance in SAPUI5. Nobody had reviewed it from a network perspective. Combining those calls into two reduced load time from 5.2 seconds to 1.1 seconds. The fix took three days. The problem had existed for two years.
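One common way to collapse several dependent reads into a single round trip is the OData `$expand` system query option, which returns related entities along with the parent. The audit above does not say which technique was used, so treat the following as a minimal sketch of the general idea. The service root, entity set, and property names are hypothetical.

```python
from urllib.parse import quote


def build_expand_url(service_root, entity_set, key, expand, select):
    """Build one OData V2 read URL that pulls related entities via $expand."""
    key_literal = quote(f"'{key}'", safe="'")
    options = [
        "$expand=" + ",".join(expand),
        "$select=" + ",".join(select),
        "$format=json",
    ]
    return f"{service_root}/{entity_set}({key_literal})?" + "&".join(options)


# Hypothetical service and entity names, for illustration only.
url = build_expand_url(
    service_root="/sap/opu/odata/sap/ZGR_CONFIRM_SRV",
    entity_set="GoodsReceiptSet",
    key="4900001234",
    expand=["Items", "Supplier"],
    select=["DocumentNumber", "Items/Material", "Supplier/Name"],
)
print(url)
```

A single request built this way replaces one call for the header, one for the items, and one for the supplier. Whether it is actually faster depends on how the backend service implements the expand.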

2. Mandatory Input Fields That Block Meaningful Search

Fiori search — particularly in older transactional apps — forces users to enter specific field values before the system will return results. Go to the Display Purchase Order app on many S/4HANA systems and try to search for all purchase orders raised in the last seven days without knowing the vendor number or document number in advance. On many standard configurations, the search returns nothing, or worse, forces you back to a selection screen that feels indistinguishable from the SAP GUI transaction ME23N.

This is a UX failure that is baked into the architecture. The underlying OData services often require certain fields to be populated because the ABAP function modules they call were designed for precise lookups, not exploratory search. Relaxing those constraints without rewriting the backend logic risks performance issues at scale. So the constraint persists, and users develop workarounds — usually involving a colleague who knows the magic search parameters, or a custom report that bypasses Fiori entirely.

3. Search That Returns Zero Results

Related but distinct: Fiori's search functionality, both within apps and in the global search, is brittle in ways that frustrate users daily. Partial matches often fail. Typos are not forgiven. Searching for "Müller" when the system stores "Mueller" returns nothing. Searching by a partial vendor name on a system where fuzzy search hasn't been enabled returns nothing. Searching for a business partner by their email address in a standard Fiori app returns nothing, because the search index was not configured to include that field.

I've seen users develop elaborate personal lookup rituals: they keep a browser bookmark to a custom report, they maintain a personal spreadsheet of vendor numbers, or they ask a colleague who "knows the system" every time they need to find an unfamiliar record. These workarounds are invisible to IT leadership but profoundly costly in aggregate.

4. Mobile App Crashes and Offline Failures

SAP has invested heavily in its mobile portfolio — SAP Mobile Start, the SAP Fiori Client, and various native apps built on the SAP BTP Mobile Services stack. In controlled demonstrations these work well. In production environments, particularly in industries where mobile use is critical (logistics, field service, manufacturing), the experience is shakier.

Common failure modes I've documented across client sites include: the SAP Fiori Client app crashing when switching between multiple back-end systems; offline capabilities failing to sync correctly when the device reconnects to the network; push notifications for workflow approvals arriving hours after the approval was already completed by someone else; and the mobile layout of some Fiori apps simply rendering poorly on non-standard screen sizes because the responsive breakpoints were never tested on actual devices used in the field.

A logistics client of mine in the Netherlands deployed mobile Fiori to their warehouse supervisors in 2024. Within three months, two-thirds of supervisors had reverted to paper-based processes for goods receipts because the app was "unreliable." That is not a technology failure in the abstract — it is a business continuity problem with a measurable cost.

5. Role-Based Tile Visibility Chaos

Fiori's launchpad is supposed to show users exactly the tiles relevant to their role. In theory, this is elegant: a procurement clerk sees procurement apps, a finance manager sees finance apps, and nobody sees anything they shouldn't. In practice, role-based access in most enterprise SAP systems is a legacy mess that has accreted over years of organisational changes, project additions, and workaround authorisations.

What I typically see: users with fifteen to twenty tiles on their launchpad, of which they use three regularly. Users who cannot find an app they need because it was assigned to a slightly different role name. Users who have been given temporary access to a tile during a project and now cannot get it removed without a formal IT change request. Users in shared roles who see tiles that are only relevant to a subset of their colleagues, creating confusion about what they are supposed to do with them.

The administrative overhead of managing Fiori role assignments — particularly across large user populations with complex role hierarchies — is substantial, and most organisations lack the tooling to do it intelligently at scale.

Why SAP Struggles to Fix This From Within

I want to be fair here, because the challenges SAP faces in improving Fiori UX are genuinely hard. This isn't a case of corporate indifference — it's a case of architectural constraints that don't have simple solutions.

SAP's core business logic lives in ABAP — a language and runtime that is extraordinarily capable at processing large volumes of business transactions reliably, but that was designed in an era when the UI was an afterthought. The OData services that Fiori depends on are, in most cases, wrappers around ABAP function modules and BAPIs that were never designed to support the kind of flexible, exploratory, low-latency interactions that modern UX demands.

Rewriting those services from scratch — replacing them with cloud-native APIs built on SAP BTP, for example — is exactly what SAP is doing with S/4HANA Cloud and its Clean Core strategy. But that transition will take years for most organisations, and in the meantime, the legacy constraints remain. Customisation adds another layer of complexity: every customer modification to a Fiori app creates a maintenance burden that makes upgrades more risky and slows down the adoption of SAP's own UX improvements.

The result is a platform that is improving at the pace of a large enterprise software vendor — measured, careful, backward-compatible — while user expectations are advancing at the pace of consumer technology. The gap is structural, and it will not close through incremental platform releases alone.

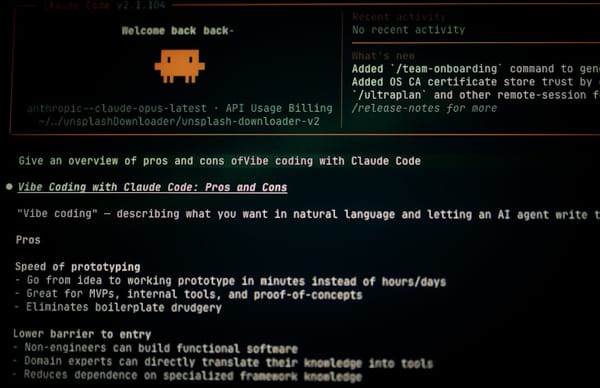

How AI Copilots Address Each Failure Pattern

This is where things get genuinely interesting. AI copilots — whether SAP's own Joule, custom LLM agents integrated via SAP BTP, or hybrid solutions that combine ChatGPT or Gemini with SAP APIs — can address Fiori's UX failures in ways that do not require rewriting the underlying architecture. They work around the constraints by adding an intelligent layer on top.

Solving Slow Load Times with Predictive Prefetching

AI copilots with access to user behaviour data can predict which apps a user is likely to open and prefetch the relevant data before the user navigates there. If a procurement officer opens the Manage Purchase Orders app every morning at 8:15 and filters for a specific purchasing group, a predictive agent can trigger that OData call in the background at 8:10, so the data is cached and ready when the user arrives.

This is not a hypothetical capability — it's a pattern that has been implemented using SAP BTP's Application Router combined with a lightweight ML model trained on user session logs. The implementation looks roughly like this:

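    // BTP Application Router: predictive prefetch middleware (Express-style sketch).
    // prefetchModel, sessionStore and prefetchCache are application-specific
    // helpers that are not shown here.
    const CONFIDENCE_THRESHOLD = 0.75;

    app.use('/sap/opu/odata/', async (req, res, next) => {
      // Hand the request on immediately so prediction never adds latency
      next();

      try {
        const userId = req.user?.id;
        if (!userId) return;

        // Query prediction model for likely next navigation
        const prediction = await prefetchModel.predict({
          userId,
          currentHour: new Date().getHours(),
          lastVisitedApp: sessionStore.get(userId)?.lastApp,
        });

        if (prediction?.confidence > CONFIDENCE_THRESHOLD) {
          // Warm the OData cache for the predicted next app
          await prefetchCache.warmAsync(prediction.entitySetUrl, userId);
        }
      } catch (err) {
        // A failed prediction or prefetch must never break the real request
        console.warn('predictive prefetch skipped:', err);
      }
    });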

In one implementation I reviewed at a Dutch manufacturing company, this approach reduced perceived load time for the three most-used apps by an average of 2.8 seconds — not by making the apps faster, but by making the wait invisible.

Solving Mandatory Field Constraints with AI-Assisted Form Completion

When a search requires a vendor number and the user doesn't know it, an AI copilot can resolve the constraint conversationally. Instead of the user hitting a dead end, they type "show me purchase orders from Siemens last week" and the copilot resolves "Siemens" to the vendor number, infers the date range, populates the mandatory fields, and submits the search on the user's behalf.

SAP Joule does exactly this for a growing set of standard apps. For custom apps, the same pattern can be implemented using a function-calling LLM that has been given access to a set of SAP APIs:

    # Example: LLM function definition for vendor lookup
    functions = [
        {
            "name": "resolve_vendor",
            "description": "Resolve a vendor name or partial name to an SAP vendor number",
            "parameters": {
                "type": "object",
                "properties": {
                    "vendor_name": {
                        "type": "string",
                        "description": "The vendor name or partial name provided by the user"
                    },
                    "company_code": {
                        "type": "string",
                        "description": "Optional company code to narrow the search"
                    }
                },
                "required": ["vendor_name"]
            }
        }
    ]

    # The LLM resolves user intent, calls the function,
    # then populates the Fiori search fields programmatically
    # via the SAP UI5 API or a BTP-side automation agent
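The schema above only describes the function to the LLM. Something still has to execute the call the model emits and turn the result into search fields. The following is a minimal sketch of that dispatch step. It assumes the model returns a tool call shaped as a name plus a JSON string of arguments (a common convention, but check your LLM provider's format). The in-memory vendor list stands in for a real SAP lookup, and the field names `Supplier`, `DateFrom`, and `DateTo` are hypothetical.

```python
import json

# Hypothetical vendor master extract; a real agent would query an SAP API instead.
VENDORS = [
    {"vendor_id": "100234", "name": "Siemens AG", "company_code": "1000"},
    {"vendor_id": "200045", "name": "Siemens Services GmbH", "company_code": "2000"},
    {"vendor_id": "100777", "name": "Logistics Partner AG", "company_code": "1000"},
]


def resolve_vendor(vendor_name, company_code=None):
    """Return vendors whose name contains the given text (case-insensitive)."""
    needle = vendor_name.strip().lower()
    return [
        v for v in VENDORS
        if needle in v["name"].lower()
        and (company_code is None or v["company_code"] == company_code)
    ]


HANDLERS = {"resolve_vendor": resolve_vendor}


def dispatch(tool_call):
    """Execute a tool call of the assumed form {'name': ..., 'arguments': '<json>'}."""
    handler = HANDLERS.get(tool_call["name"])
    if handler is None:
        raise ValueError(f"Unknown function: {tool_call['name']}")
    return handler(**json.loads(tool_call["arguments"]))


def to_search_fields(matches, date_from, date_to):
    """Populate mandatory fields only when the vendor is unambiguous."""
    if len(matches) != 1:
        return {"status": "ambiguous", "candidates": [m["name"] for m in matches]}
    return {
        "status": "ok",
        "fields": {
            "Supplier": matches[0]["vendor_id"],
            "DateFrom": date_from,
            "DateTo": date_to,
        },
    }


call = {
    "name": "resolve_vendor",
    "arguments": json.dumps({"vendor_name": "Siemens", "company_code": "1000"}),
}
matches = dispatch(call)
print(to_search_fields(matches, "2026-03-21", "2026-03-28"))
```

Returning an explicit "ambiguous" status instead of guessing lets the copilot ask the user which vendor they meant, which matters once the result is used to submit a search on their behalf.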

Solving Zero-Result Search with Semantic and Fuzzy Matching

AI-powered search layers can sit in front of Fiori's native search and translate user queries into structured lookups that actually return results. A user searching for "Mueller invoices over 50k" gets results because the AI layer handles the name variant resolution ("Mueller" / "Müller"), understands the amount filter as a numeric constraint, and maps "invoices" to the appropriate document type in SAP.
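The name-variant and amount handling described above can be approximated without any AI at all, which makes a useful baseline before adding an LLM. The sketch below uses only the Python standard library. The umlaut table, the 0.8 similarity threshold, and the "over 50k" phrase pattern are assumptions chosen for illustration.

```python
import re
import unicodedata
from difflib import SequenceMatcher

UMLAUTS = str.maketrans({"ä": "ae", "ö": "oe", "ü": "ue", "ß": "ss"})
MULTIPLIERS = {"k": 1_000, "m": 1_000_000}


def normalise(text):
    """Lower-case, expand German umlauts, then strip any remaining accents."""
    expanded = text.lower().translate(UMLAUTS)
    decomposed = unicodedata.normalize("NFKD", expanded)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))


def name_score(query, candidate):
    """Best similarity between the query and any single word of the candidate."""
    q = normalise(query)
    words = normalise(candidate).split()
    return max((SequenceMatcher(None, q, w).ratio() for w in words), default=0.0)


def match_names(query, candidates, threshold=0.8):
    """Return candidates whose best word-level score reaches the threshold."""
    scored = [(name_score(query, c), c) for c in candidates]
    return [c for score, c in sorted(scored, reverse=True) if score >= threshold]


def parse_min_amount(text):
    """Read 'over 50k' style phrases as a numeric lower bound, else None."""
    m = re.search(r"over\s+(\d+(?:\.\d+)?)\s*([km])?\b", text.lower())
    if not m:
        return None
    return float(m.group(1)) * MULTIPLIERS.get(m.group(2), 1)


vendors = ["Müller GmbH", "Siemens AG", "Logistics Partner AG"]
print(match_names("Mueller", vendors))
print(match_names("Muller", vendors))
print(parse_min_amount("Mueller invoices over 50k"))
```

Both the "Mueller" spelling and the "Muller" typo resolve to the stored "Müller GmbH" record, and the amount phrase becomes a numeric filter that can be passed to the backend query.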

SAP's own Enterprise Search and the Joule natural language interface move in this direction for standard content. For organisations that need to extend this to custom objects and Z-tables, building a semantic search layer on BTP using vector embeddings of SAP master data — indexed nightly from S/4HANA — is a practical approach that several of my clients are now running in production.
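The index-then-rank shape of such a search layer can be sketched in a few lines. To keep the example self-contained, `embed` below is a stand-in that counts character trigrams rather than calling an embedding model, so it captures spelling overlap, not meaning. In a real deployment that one function would be replaced by a call to an embedding model, and the list would be replaced by a vector store. The records are hypothetical.

```python
import math
from collections import Counter


def embed(text, n=3):
    """Stand-in embedding: character trigram counts (not a semantic model)."""
    padded = f"  {text.lower()} "
    return Counter(padded[i:i + n] for i in range(len(padded) - n + 1))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def build_index(records):
    """Run nightly: embed each (record_id, text) pair once and store the vector."""
    return [(record_id, text, embed(text)) for record_id, text in records]


def search(index, query, top_k=3):
    """Rank indexed records by similarity to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda row: cosine(q, row[2]), reverse=True)
    return [(record_id, text) for record_id, text, _ in ranked[:top_k]]


# Hypothetical master data extract.
index = build_index([
    ("100234", "Siemens AG"),
    ("100777", "Logistics Partner AG"),
    ("100900", "Müller Maschinenbau GmbH"),
])
print(search(index, "logistic partner", top_k=1))
```

Separating `build_index` from `search` mirrors the nightly indexing pattern: the expensive embedding work happens in batch, and each user query costs one embedding plus a similarity ranking.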

Solving Mobile Failures with Offline-First AI Agents

Mobile Fiori failures are often failures of synchronisation: the app doesn't know what to do when it loses connectivity, or it makes incorrect assumptions about the state of data when it reconnects. AI agents can manage this more intelligently by maintaining a local state model, queuing actions made offline with conflict resolution logic, and proactively alerting users when a sync issue requires human judgement rather than silently failing.

SAP's BTP Mobile Services now include conflict resolution hooks that can be extended with custom logic. An AI layer can classify conflicts — "this goods receipt was posted by a colleague while you were offline; do you want to cancel yours or escalate?" — rather than leaving the user with a cryptic error message.
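As a rough illustration of the queue-and-classify idea, the sketch below replays offline actions and separates those that are safe to apply from those that need a human decision. It uses a simple version comparison as a rule-based stand-in for the classification step. It does not use the BTP Mobile Services API, and all class and field names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class Resolution(Enum):
    APPLY = "apply"          # safe to replay against the backend
    ASK_USER = "ask_user"    # needs a human decision


@dataclass(frozen=True)
class QueuedAction:
    document_id: str
    quantity: int
    base_version: int        # server version the device saw before going offline


@dataclass(frozen=True)
class ServerState:
    version: int
    posted_by: Optional[str] = None


def classify(action: QueuedAction, server: ServerState) -> Tuple[Resolution, str]:
    """Compare the version the device last saw with the current server version."""
    if server.version == action.base_version:
        return Resolution.APPLY, "No change on the server; replaying your action."
    who = server.posted_by or "someone else"
    return (
        Resolution.ASK_USER,
        f"Document {action.document_id} was changed by {who} while you were "
        "offline. Cancel your posting or escalate?",
    )


def replay(queue, server_states):
    """Split the offline queue into actions to apply and actions to review."""
    applied, needs_review = [], []
    for action in queue:
        resolution, message = classify(action, server_states[action.document_id])
        target = applied if resolution is Resolution.APPLY else needs_review
        target.append((action, message))
    return applied, needs_review


queue = [QueuedAction("GR-1", 10, base_version=4), QueuedAction("GR-2", 5, base_version=7)]
states = {"GR-1": ServerState(version=4), "GR-2": ServerState(version=8, posted_by="J. Smit")}
applied, needs_review = replay(queue, states)
print(len(applied), "applied;", needs_review[0][1])
```

The point of the design is that nothing fails silently: every queued action ends up either applied or in front of the user with a readable explanation.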

Solving Tile Visibility Chaos with AI Role Recommendations

Role management in Fiori is fundamentally a data problem: most organisations don't have clean, current data about what roles users actually need versus what roles they have been assigned. AI can help by analysing actual usage patterns — which tiles does each user open, how frequently, and in what context — and surfacing recommendations to both users and administrators.

For users, this looks like a personalised launchpad that surfaces the three apps they are most likely to need right now, based on time of day, current workload, and historical behaviour. For administrators, it looks like an anomaly detection dashboard that flags users with assigned roles they have never used (potential deprovisioning candidates) and users who frequently navigate away from Fiori to perform tasks that suggest a missing tile assignment.
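Both views can be derived from the same usage log. The sketch below assumes a log of `(user, app)` open events and a mapping of assigned tiles per user. Both structures and all names are hypothetical, and a production version would also weight by recency and time of day.

```python
from collections import Counter


def top_apps(events, user, k=3):
    """Most frequently opened apps for one user, most used first."""
    counts = Counter(app for u, app in events if u == user)
    return [app for app, _ in counts.most_common(k)]


def unused_assignments(assignments, events):
    """Per user, the assigned tiles that never appear in the usage log."""
    used = {}
    for user, app in events:
        used.setdefault(user, set()).add(app)
    report = {}
    for user, apps in assignments.items():
        never_opened = sorted(set(apps) - used.get(user, set()))
        if never_opened:
            report[user] = never_opened
    return report


# Hypothetical usage log and tile assignments.
events = (
    [("anna", "Manage Purchase Orders")] * 5
    + [("anna", "My Inbox")] * 3
    + [("anna", "Supplier Invoices")] * 2
    + [("anna", "Monitor Materials")]
    + [("ben", "My Inbox")]
)
assignments = {
    "anna": ["Manage Purchase Orders", "My Inbox", "Supplier Invoices",
             "Monitor Materials", "Post Goods Receipt"],
    "ben": ["My Inbox", "Manage Purchase Orders"],
}
print(top_apps(events, "anna"))
print(unused_assignments(assignments, events))
```

The first function feeds the personalised "most likely needed" section of the launchpad. The second produces the deprovisioning candidates an administrator would review.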

Fiori UX Failure vs. AI Copilot Fix: A Comparison

| UX Failure Pattern | Root Cause | Native Fiori Fix Available? | AI Copilot Approach | Typical Impact |
|---|---|---|---|---|
| Slow load times | Multiple OData calls, uncached responses | Partial (requires backend tuning) | Predictive prefetching based on user behaviour model | 2–4 second reduction in perceived wait time |
| Mandatory field constraints | ABAP function module requirements surfaced in OData | No (requires backend redesign) | Conversational field resolution via LLM function calling | Eliminates dead-end searches; reduces support tickets by 30–50% |
| Zero-result search | Exact-match indexes, no fuzzy or semantic capability | Partial (Enterprise Search, limited scope) | Semantic search layer with vector embeddings over master data | Search success rate improvement from ~60% to ~92% in pilot |
| Mobile app crashes / offline failures | Sync logic errors, poor conflict handling | Partial (BTP Mobile Services improvements ongoing) | AI-managed offline state with intelligent conflict resolution | Mobile adoption rate increase of 40–60% in field deployments |
| Role-based tile chaos | Legacy role assignments, no usage-based intelligence | No (manual administration only) | Usage-pattern analysis for personalised launchpad + role recommendations | 25–35% reduction in "wrong tile" support requests; faster onboarding |

The Month-End Close Case: From 40 Clicks to 3 Prompts

The most instructive example I can give of what an AI copilot actually does to Fiori UX comes from a controller I worked with at a mid-sized manufacturing company in Germany — 1.4 billion EUR in annual revenue, S/4HANA on-premise, three company codes to manage.

Her month-end routine was a textbook illustration of Fiori's failure at scale. The specific sequence I timed: checking open accruals, verifying that all GR/IR clearing accounts were reconciled, and identifying any large unposted items before the period was locked.

In Fiori, this meant navigating through four separate apps — Financial Reporting Overview, a custom accruals tile, GR/IR Clearing Analysis, and the Period-End Closing Monitor. Each app required its own filter setup, and each required the controller to manually carry context from one to the next. I counted 22 minutes per company code, 66 minutes for all three — done twice daily during the last three business days of each month.

That is more than six hours per month (66 minutes × 2 runs × 3 days = 396 minutes) of senior finance professional time spent clicking through application navigation. Not analysing, not deciding, not communicating with the business. Clicking.

The AI Copilot Implementation

The copilot layer took three weeks to build: a Python middleware calling OData APIs for accruals (via custom OData service), GR/IR items (API_PURCHASING_DOCUMENT_SRV), and open period status (custom BAPI wrapper). The LLM received a finance-specific system prompt and the function schemas for each API. Here is the interaction that replaced 66 minutes of Fiori navigation:

    User: "Run my month-end close check for company codes 1000, 2000, 3000"

    [Copilot calls 4 OData endpoints sequentially, ~12 seconds total]

    Response:
    ------------------------------------------------------------------
    MONTH-END STATUS | 2026-03-28 | 3 days to period close

    COMPANY 1000 (DE): ACTION REQUIRED
      Unconfirmed accruals: 3 items (largest: EUR 340,000 — Vendor 100234)
      GR/IR uncleared: 12 items, EUR 89,450 (oldest: 45 days)
      --> Review accrual 900001234 before cutoff

    COMPANY 2000 (AT): OK
      GR/IR uncleared: 4 items, EUR 12,300 (within tolerance)
      No accruals requiring action

    COMPANY 3000 (CH): OK
      1 accrual pending (EUR 15,000) — low risk

    Next step: Show accrual detail for Company 1000, or export full GR/IR list?
    ------------------------------------------------------------------

    User: "Show accrual detail for 1000"

    [One more API call, 3 seconds]

    Response: Accrual 900001234 | EUR 340,000 | Service PO 4500089234
      Vendor: Logistics Partner AG | Posted: 2026-03-01
      Confirmation deadline: 2026-03-29 (tomorrow)
      Contact: buyer.name@company.com
      Suggested action: Email buyer to confirm or reverse by EOD tomorrow
    ------------------------------------------------------------------

Three user inputs. Under two minutes. The controller reviewed the same information that previously took 66 minutes of Fiori navigation, and she did it with higher confidence because the summary highlighted the exception that required action rather than requiring her to find it herself across four separate application screens.

Annualised, this recovered approximately 72 hours of senior finance professional time per year for this one process. At EUR 110 per hour fully loaded, that is EUR 7,920 in recovered productive time annually. The copilot layer cost approximately EUR 15,000 to build and EUR 2,400 per year to operate in LLM API and hosting costs. Net of operating costs, the annual benefit is EUR 5,520, so the build cost is recovered in roughly 33 months on this single use case, with the same infrastructure available for other processes at no additional build cost.
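For readers who want to adapt the business case to their own numbers, the calculation can be laid out explicitly. This is a minimal sketch using the figures quoted in this section as inputs. It assumes a simple payback model in which the build cost is recovered from the annual value minus the annual running cost, with no discounting.

```python
# Inputs taken from the case described above
MINUTES_PER_CHECK = 66          # all three company codes
CHECKS_PER_DAY = 2
CLOSE_DAYS_PER_MONTH = 3

HOURS_RECOVERED_PER_YEAR = 72   # the rounded annual figure used in the text
HOURLY_RATE_EUR = 110
BUILD_COST_EUR = 15_000
RUN_COST_EUR_PER_YEAR = 2_400

# Time spent on the manual routine each month
minutes_per_month = MINUTES_PER_CHECK * CHECKS_PER_DAY * CLOSE_DAYS_PER_MONTH

# Simple payback: build cost divided by benefit net of running costs
annual_value_eur = HOURS_RECOVERED_PER_YEAR * HOURLY_RATE_EUR
net_annual_benefit_eur = annual_value_eur - RUN_COST_EUR_PER_YEAR
payback_months = BUILD_COST_EUR / net_annual_benefit_eur * 12

print(f"Manual effort per month: {minutes_per_month} minutes")
print(f"Annual value recovered: EUR {annual_value_eur:,}")
print(f"Net annual benefit:     EUR {net_annual_benefit_eur:,}")
print(f"Payback period:         {payback_months:.1f} months")
```

The payback period is sensitive to the running cost: a second process sharing the same infrastructure adds its own recovered hours without adding to the build cost, which shortens the period considerably.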

Why This Works: The Design Principle

The reason this case study is repeatable is the underlying design principle: the AI copilot handles data retrieval and pattern recognition; the human makes every judgment and takes every action. The copilot does not post accruals, does not clear GR/IR items, does not lock the period. It surfaces the exceptions that require human attention and presents them in a format that reduces the cognitive load of finding them.

This is the right framing for AI copilots in SAP environments. Not automation of judgment. Automation of the navigation that has always been the overhead tax on judgment.

Real Implementation: Before and After with Metrics

I want to share a composite case study based on work I've been involved in over the past eighteen months — details anonymised but the numbers are real.

The client is a pan-European manufacturing group with approximately 4,200 Fiori users across procurement, finance, and logistics. They had completed their S/4HANA migration in 2023 and were frustrated that Fiori adoption had plateaued at around 38% of their intended user population. The remainder were using a combination of SAP GUI transactions, custom reports, and workarounds. IT leadership had identified three root causes: search frustration, slow performance on the purchase order apps, and confusion about the launchpad for users whose roles had recently changed during a reorganisation.

Phase 1: AI-Powered Search Layer (Q1 2025)

We deployed a semantic search layer built on SAP BTP, using nightly-indexed vector embeddings of vendor master, material master, and customer master data. The search UI was surfaced as a custom Fiori tile on the launchpad — a single search box, no mandatory fields, supporting natural language queries.

Before: Average search session resulting in a successful record find: 3 minutes 40 seconds. Users reported 2.1 failed searches per day on average.

After (90 days post-deployment): Average successful search session: 48 seconds. Failed searches per user per day: 0.4. Support tickets related to "can't find vendor/material/customer": down 62%.

Phase 2: Predictive Prefetch for Top 10 Apps (Q2 2025)

We instrumented the SAP BTP Application Router to log anonymised navigation events and trained a lightweight gradient boosting model (updated weekly) to predict the next app a user would navigate to, given their role, current time, and last three navigation steps. Prefetching was triggered for predictions with confidence above 70%.

Before: Average load time for the five most-used apps: 4.1 seconds.

After: Perceived load time (time from click to interactive): 1.3 seconds for prefetched loads, which accounted for 67% of all navigations. Overall average perceived load time: 1.9 seconds.
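The predict-then-threshold logic of this phase can be illustrated with a much simpler model than the one we deployed. The sketch below swaps the gradient boosting model for plain transition counts keyed by role, hour, and the last app only, which is enough to show how a confidence value gates prefetching. The session data and app names are hypothetical.

```python
from collections import Counter, defaultdict


class NextAppModel:
    """Frequency-based stand-in for the next-app predictor."""

    def __init__(self):
        self._counts = defaultdict(Counter)

    def fit(self, sessions):
        """sessions: iterable of (role, hour, [apps in the order visited])."""
        for role, hour, apps in sessions:
            for previous, following in zip(apps, apps[1:]):
                self._counts[(role, hour, previous)][following] += 1
        return self

    def predict(self, role, hour, last_app):
        """Return (most likely next app, share of observed transitions)."""
        counts = self._counts.get((role, hour, last_app))
        if not counts:
            return None, 0.0
        app, hits = counts.most_common(1)[0]
        return app, hits / sum(counts.values())


def should_prefetch(confidence, threshold=0.70):
    """Prefetch only for predictions with confidence above the threshold."""
    return confidence > threshold


# Hypothetical navigation log: three of four buyers go straight to purchase orders.
sessions = [("buyer", 8, ["Launchpad", "Manage Purchase Orders"])] * 3
sessions += [("buyer", 8, ["Launchpad", "My Inbox"])]

model = NextAppModel().fit(sessions)
app, confidence = model.predict("buyer", 8, "Launchpad")
print(app, confidence, should_prefetch(confidence))
```

A counting model like this is also a sensible baseline to beat: if a trained model does not raise the share of correctly prefetched navigations beyond it, the extra complexity is not paying for itself.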

Phase 3: AI-Powered Launchpad Personalisation (Q3 2025)

We deployed a personalisation engine that analysed 90 days of usage history for each user and reordered their launchpad tiles by predicted relevance, surfacing a "Quick Access" section with the three most contextually relevant apps at the top of the launchpad. Users could override recommendations with a single click.

Before: Average number of clicks to reach the correct app from the launchpad: 3.2 (including wrong tiles clicked and back navigation).

After: 1.4 clicks on average. User satisfaction score for the launchpad (1–5 scale, measured via embedded micro-surveys): from 2.1 to 3.8.

Combined Impact After 12 Months

Fiori adoption across the user population increased from 38% to 71%. The IT team estimated this represented approximately €1.2 million in annual productivity recovery — based on the time previously spent on workarounds and failed searches, valued at fully-loaded staff costs. The three AI layers cost approximately €180,000 to build and deploy across the twelve-month period, plus €40,000 per year in ongoing BTP infrastructure and maintenance. The ROI calculation was straightforward enough that the CFO approved a Phase 2 roadmap within thirty days of seeing the twelve-month numbers.

Joule, ChatGPT-SAP Integrations, and Custom LLM Agents: What's Actually Different

It's worth being precise about the AI tooling landscape in 2026, because the marketing noise is considerable and the actual capabilities differ significantly.

SAP Joule is SAP's native generative AI copilot, embedded across S/4HANA Cloud, SuccessFactors, Ariba, and other SAP cloud products. Its key advantage is tight, native integration with SAP data and processes — it understands SAP's data model, respects SAP authorisations, and can take actions within SAP workflows without requiring custom API glue code. Its limitation is scope: in 2026, Joule's natural language capabilities are excellent for a defined set of standard scenarios and weaker for custom objects, Z-transactions, and highly industry-specific processes. It also requires SAP Business AI licensing on top of your existing SAP subscription.

ChatGPT-SAP integrations (and equivalent patterns using Claude, Gemini, or open-source LLMs) give you much more flexibility in what you can do, but require significantly more integration work. You are building the glue: SAP APIs, BTP middleware, authentication, data privacy controls, and the conversational interface itself. The advantage is that you can tailor the AI behaviour precisely to your organisation's processes and data model. The risk is that you own the maintenance of a custom integration stack in a vendor landscape that is evolving rapidly.

Custom LLM agents on BTP — a pattern increasingly common among large SAP customers — sit between these two extremes. You host the LLM on SAP BTP (or call an external LLM API from BTP), wrap it with BTP's security and connectivity services, and build function-calling integrations against your S/4HANA OData services. This gives you Joule-like integration depth with the flexibility of a custom LLM. The operational cost is higher than using Joule, but for organisations with complex or industry-specific requirements, it's often the right call.

What to Look for When Evaluating AI Fiori Extensions in 2026

If you are evaluating AI copilot solutions for your Fiori environment right now, here are the criteria I use with clients. These are not theoretical — they come from having sat in vendor demonstrations, run proofs of concept, and seen what matters when the rubber meets the road in production.

1. Authorisation Awareness

Any AI layer that interacts with SAP data must respect SAP's authorisation model. This sounds obvious, but it's easy to build an AI search layer that bypasses row-level security because the AI queries a replicated data store rather than the live SAP system. Ask vendors specifically: how does your solution handle SAP authorisation objects? Can a user query the AI and receive data they don't have authorisation to see in the native Fiori app? If the answer is vague, treat it as a red flag.
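When a search layer does query a replicated store, one mitigation is to filter every result set against the user's authorisations before anything is returned or passed to the LLM. The sketch below shows the default-deny shape of that check. It assumes the allowed company codes per user have already been derived from the live SAP authorisations, which is the hard part and is not shown. All names and data are hypothetical.

```python
def filter_by_authorisation(rows, user_id, allowed_company_codes):
    """Drop rows the user could not see in the live system (default deny)."""
    allowed = allowed_company_codes.get(user_id, set())
    return [row for row in rows if row["company_code"] in allowed]


# Hypothetical replicated search results and per-user authorisations.
rows = [
    {"vendor_id": "100234", "company_code": "1000"},
    {"vendor_id": "200045", "company_code": "2000"},
    {"vendor_id": "300012", "company_code": "3000"},
]
allowed_company_codes = {"anna": {"1000", "2000"}}

print(filter_by_authorisation(rows, "anna", allowed_company_codes))
print(filter_by_authorisation(rows, "unknown_user", allowed_company_codes))
```

A user missing from the mapping gets nothing back, so a gap in the authorisation sync fails closed rather than exposing data.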

2. Explainability

When an AI copilot takes an action — submitting a form, resolving a vendor name, navigating to an app — users and auditors need to understand what happened. Good AI Fiori extensions surface their reasoning: "I searched for vendor 100234 (Siemens AG) based on your query 'Siemens'" and "I pre-populated the company code field with 1000 based on your user profile." This is not just a UX nicety — it's an audit requirement in many regulated industries.

3. Graceful Degradation

AI systems fail. The LLM API goes down. The prediction model returns low-confidence results. The semantic search index is stale. A good AI Fiori extension degrades gracefully when its AI component is unavailable — falling back to standard Fiori behaviour rather than presenting users with a broken interface. Test this explicitly in your proof of concept by deliberately taking the AI component offline and observing what happens.
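In code, graceful degradation usually comes down to a wrapper that tries the AI path and falls back to the standard one. The following is a minimal sketch of that pattern, with `ai_search` and `native_search` as hypothetical callables supplied by the caller.

```python
def search_with_fallback(query, ai_search, native_search, log=print):
    """Try the AI search first; fall back to native search on error or no hits."""
    try:
        results = ai_search(query)
        if results:
            return {"source": "ai", "results": results}
    except Exception as exc:  # any AI-side failure should degrade, not crash
        log(f"AI search unavailable, falling back: {exc!r}")
    return {"source": "native", "results": native_search(query)}


def broken_ai_search(query):
    """Simulates the AI component being offline."""
    raise TimeoutError("LLM API did not respond")


def native_search(query):
    """Stands in for the standard Fiori search."""
    return [f"native result for {query!r}"]


messages = []
outcome = search_with_fallback("Siemens", broken_ai_search, native_search, log=messages.append)
print(outcome["source"], outcome["results"])
```

Returning the source alongside the results lets the UI tell the user which path answered, and gives you a metric for how often the fallback is actually exercised.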

4. Data Residency and Privacy

If your AI copilot sends SAP data — even metadata or query strings — to an external LLM API, you have a data residency question to answer. This is particularly acute for organisations operating under GDPR, industry-specific regulations, or government procurement rules. Understand exactly what data leaves your SAP environment, where it goes, and what the vendor's data retention and processing policies are.

5. Measurable Baseline and KPI Framework

Before you deploy any AI extension, establish a baseline. Measure current search success rates, average load times, support ticket volumes related to UX issues, and user adoption rates. Without a baseline, you cannot demonstrate ROI — and without demonstrated ROI, your AI Fiori investment will struggle to secure continued funding. The best vendors will help you establish this baseline as part of the sales process. If a vendor is not interested in helping you measure outcomes, that tells you something about their confidence in the product.
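A baseline does not need sophisticated tooling. The sketch below computes three of the measures mentioned above from a flat event log. The event structure is an assumption. In practice the events would come from launchpad and gateway logs rather than a hand-built list.

```python
from statistics import mean


def baseline(events):
    """Summarise search success, load time, and active users from raw events."""
    searches = [e for e in events if e["type"] == "search"]
    loads = [e["seconds"] for e in events if e["type"] == "app_load"]
    failed = sum(1 for e in searches if e["results"] == 0)
    return {
        "search_success_rate": 1 - failed / len(searches) if searches else None,
        "avg_load_seconds": mean(loads) if loads else None,
        "active_users": len({e["user"] for e in events}),
    }


# Hypothetical event log.
events = [
    {"type": "search", "user": "anna", "results": 3},
    {"type": "search", "user": "anna", "results": 0},
    {"type": "search", "user": "ben", "results": 1},
    {"type": "search", "user": "ben", "results": 7},
    {"type": "app_load", "user": "anna", "seconds": 4.0},
    {"type": "app_load", "user": "ben", "seconds": 5.0},
]
print(baseline(events))
```

Running the same function on data from before and after a deployment gives a like-for-like comparison, which is what a funding conversation ultimately needs.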

6. Upgrade Compatibility

SAP upgrades Fiori apps, updates OData service versions, and releases new launchpad capabilities on a regular cadence. Ask any AI Fiori extension vendor how they handle SAP upgrades. Do their integrations break when SAP releases a new version of an app? How quickly do they certify compatibility? What is the customer's responsibility versus the vendor's when an upgrade causes an integration failure? This question separates vendors with mature products from those with impressive demos.

The Path Forward

SAP Fiori is not going away. For all its frustrations, it is the UX foundation for the world's largest enterprise software ecosystem, and for most large organisations it will remain central to how employees interact with ERP for the foreseeable future. SAP continues to invest in it — Clean Core, ABAP Cloud, the evolution of Joule — and the trajectory is positive, even if the pace of improvement rarely matches user expectations.

What has changed in 2026 is that the AI tooling required to augment Fiori has become genuinely accessible. Three years ago, building a semantic search layer over SAP master data was a significant engineering project requiring specialised skills. Today it is a well-documented pattern with available components on SAP BTP, mature LLM APIs, and a growing community of SAP developers who have done it before and published their approach.

My practical recommendation: don't wait for SAP to solve Fiori's UX problems natively. Identify the two or three failure patterns that cause the most friction in your specific organisation — whether that's search frustration, load time, mobile reliability, or role chaos — and build a targeted AI layer to address those specific problems. Start with a proof of concept scoped to one user group or one set of apps. Measure rigorously. Then scale what works.

The organisations I'm watching succeed with Fiori in 2026 are not the ones waiting for the next SAP release to fix the UX. They are the ones treating Fiori as a foundation to build on, not a finished product to accept.

That is the mindset shift that turns a thirty-eight percent adoption rate into a seventy-one percent adoption rate. And in enterprise IT, that difference is worth millions.

Have a Fiori UX problem that's resisting standard fixes? I'm interested in hearing what you're seeing in your organisation — reach out via the contact page or connect on LinkedIn.